For the first time in human history, nearly every person is under daily surveillance, made possible by the ubiquitous connectivity we now have access to. Unfortunately, along with connectivity has come an entire industry built on privacy violations. Metadata about which Internet services you use allows network operators to build individual profiles that inform targeted advertisements. By observing communications between people, network operators can also build complex social graphs that give deep insight into friendships, social groups, and the structure of companies: information that many would rather keep private. Even seemingly harmless network services such as DNS, the network protocol responsible for translating domain names to IP addresses, can reveal individual network usage or even what types of devices you are using to connect to the world.

At INVISV, we build privacy-focused network infrastructure. In our decades of experience, we have noticed that a simple, elegant system architecture is often present in systems that offer practical, deployable privacy solutions. We have come to call this common design pattern The Decoupling Principle.

The Decoupling Principle

The Decoupling Principle is a well-known but unwritten law: to ensure privacy, information should be divided architecturally and institutionally so that each entity (such as a company) has only the information it needs to perform its function. Architectural decoupling entails splitting the functionality for different fundamental actions in a system, such as decoupling authentication (proving one should be allowed to use the network) from connectivity (establishing session state for communicating). Institutional decoupling entails splitting the remaining information among non-colluding entities, such as distinct companies or network operators, or between a user and network peers. In other words, the Decoupling Principle suggests always separating who you are from what you do.

Decoupling Example: Relay

Previously we discussed how INVISV Relay works to decouple information about your Internet traffic.

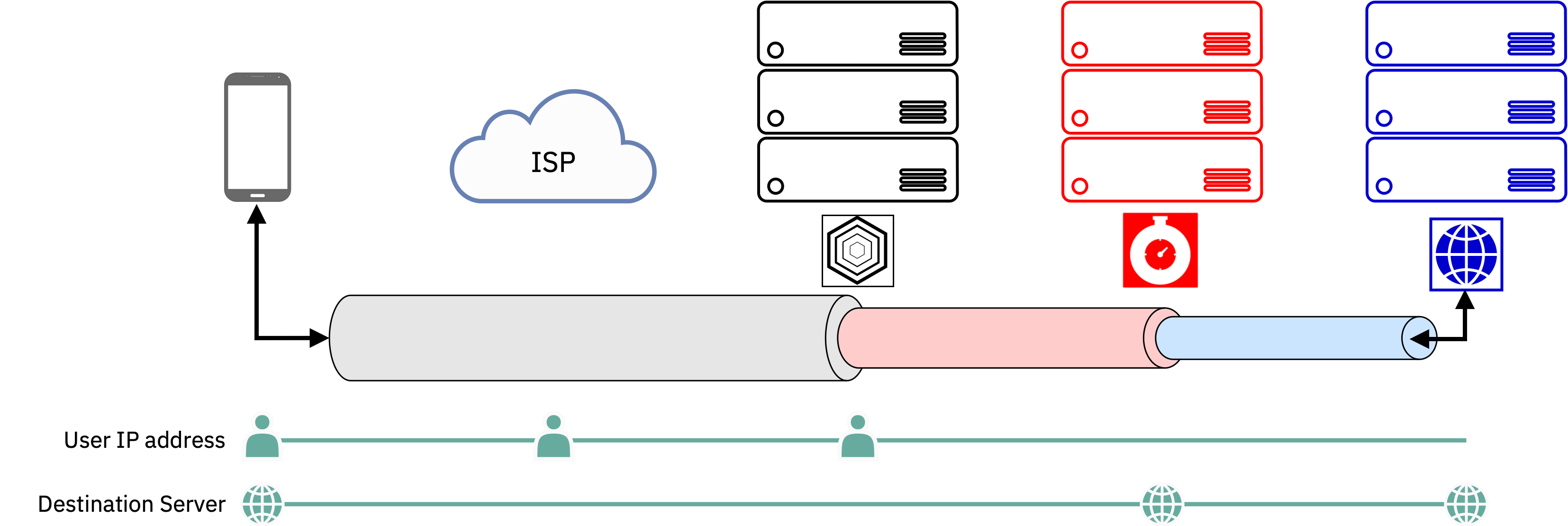

The design of Relay is such that INVISV only sees the source side of a network connection and Fastly only sees the destination side. The information about each side is decoupled, and so no company sees both sides. This is fundamentally different from a VPN, as we discussed in our previous post: VPNs can see both sides of a connection and so architecturally provide no privacy protection beyond promises made by the provider.

Analysis Language

To enable system analysis, we can create some simple definitions regarding user identity and user data. We define ▲ as sensitive user identity information known by some entity and, likewise, △ as non-sensitive user identity information. We define ● as sensitive user data and ⊙ as non-sensitive user data. We can then define tuples to describe the knowledge held by an entity in a network. For example, (▲,⊙) means that the entity has access to sensitive user identity and non-sensitive user data.
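The notation above maps naturally onto a small data type. The following is a minimal sketch, not part of any INVISV tooling; the names `Identity`, `Data`, `Knowledge`, and `couples` are our own illustrative choices.

```python
from dataclasses import dataclass
from enum import Enum


class Identity(Enum):
    SENSITIVE = "▲"
    NON_SENSITIVE = "△"


class Data(Enum):
    SENSITIVE = "●"
    NON_SENSITIVE = "⊙"


@dataclass(frozen=True)
class Knowledge:
    """What a single entity knows, written as an (identity, data) tuple."""
    identity: Identity
    data: Data

    def couples(self) -> bool:
        # True when one entity holds both sensitive identity and sensitive data.
        return self.identity is Identity.SENSITIVE and self.data is Data.SENSITIVE

    def __str__(self) -> str:
        return f"({self.identity.value},{self.data.value})"


# The example tuple from the text: sensitive identity, non-sensitive data.
example = Knowledge(Identity.SENSITIVE, Data.NON_SENSITIVE)
```

Under this sketch, `str(example)` renders the tuple in the same notation as the text, and `example.couples()` reports whether that entity alone could tie a user to their activity.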

Examples of the Decoupling Principle in Practice

Mixnets / Tor

A classic example of a system that adheres to the Decoupling Principle is that of Mixnets, created by David Chaum. This approach introduced the notion of multi-hop relaying across mutually-non-cooperating entities (mixes). A message is encrypted using the mix’s public key before being sent. The mix decrypts using its private key and forwards to the receiver or to another mix. Mixnets were later adapted for real-time Internet communications in work on Onion Routing, and later improved in the popularly-deployed Tor system.

Mixnet analysis:

- The sender necessarily has access to all sensitive information: (▲,●)

- The first mix knows sensitive user identity and non-sensitive user data: (▲,⊙)

- Subsequent mixes (up to mix N-1) know non-sensitive user identity and data: (△,⊙)

- The receiver and final mix know non-sensitive user identity and sensitive data: (△,●)

We see that Mixnets and Tor follow the Decoupling Principle: no entity other than the sender has access to both sensitive identity and sensitive data.
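To make the per-hop view concrete, here is a toy simulation of layered forwarding. It performs no real cryptography: the hypothetical `Layer` class merely stands in for "encrypted to this mix's public key", and the names `build_onion` and `route` are ours. It only illustrates which hop observes what, under the assumption (matching the analysis above) that the final mix removes the last layer.

```python
from dataclasses import dataclass
from typing import Union


@dataclass(frozen=True)
class Layer:
    """One onion layer; stands in for encryption to `opener`'s public key."""
    opener: str                  # the mix able to remove this layer
    next_hop: str                # where that mix forwards the contents
    inner: Union["Layer", str]   # another layer, or the plaintext message


def build_onion(message: str, mixes: list[str], receiver: str) -> Union[Layer, str]:
    """Wrap the message from the innermost layer (last mix) outward."""
    hops = mixes + [receiver]
    onion: Union[Layer, str] = message
    for i in range(len(mixes) - 1, -1, -1):
        onion = Layer(opener=mixes[i], next_hop=hops[i + 1], inner=onion)
    return onion


def route(sender: str, onion: Union[Layer, str]):
    """Pass the onion along the path, recording what each mix observes."""
    observations = {}
    previous = sender
    while isinstance(onion, Layer):
        sees_plaintext = isinstance(onion.inner, str)
        observations[onion.opener] = {
            "previous_hop": previous,
            "next_hop": onion.next_hop,
            "plaintext": onion.inner if sees_plaintext else None,
        }
        previous = onion.opener
        onion = onion.inner
    return observations, onion


observations, delivered = route(
    "alice", build_onion("hello", ["mix1", "mix2", "mix3"], "bob")
)
```

In this run, only `mix1` sees `alice` as its previous hop, and only `mix3` sees the plaintext; the middle mix sees neither.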

PGPP

To receive service in today’s cellular architecture, SIMs uniquely identify themselves (and thus their users) to operators. This is now a cause of major privacy violations, as operators sell and leak identity and location data of hundreds of millions of mobile users.

To remedy this situation, we created Pretty Good Phone Privacy (PGPP). PGPP leverages the Decoupling Principle to achieve location anonymity in the cellular architecture. Traditionally, the cellular architecture relies on the International Mobile Subscriber Identity (IMSI), a permanent, globally unique identifier stored on a SIM card, for billing and authentication as well as for mobility and connectivity. Because billing and authentication effectively create a binding between the IMSI and a user’s identity, the user’s subsequent usage and physical movements can easily be tracked and attributed to them simply as a passive byproduct of operating a cellular network.

PGPP decouples billing and authentication from the cellular core (the NGC in 5G; PGPP’s design applies to all modern cellular architectures). It moves them to an external server, the PGPP-GW, which can be operated by a second organization and is reached through an over-the-top oblivious authentication protocol, while leaving mobility and connectivity functions in the cellular core as they are today. Because billing (and with it the user’s human identity, ▲H) and authentication move out of the core, IMSIs no longer need to identify individual users. We denote these altered IMSIs as the non-sensitive network identity △N; they are either identical or shuffled periodically. This ensures that individual users cannot be linked to their activity as they connect to and move through the network.

PGPP Analysis:

- User: (▲H,▲N,●)

- PGPP-GW: (▲H,△N,⊙)

- NGC: (△H,△N,●)
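The three-part tuples above can be checked mechanically. This is a rough sketch under our own assumptions: the class `PgppKnowledge` and the rule in `can_attribute_activity` (an entity can attribute activity to a user if it holds sensitive data together with any sensitive identity) are illustrative, and the `TRADITIONAL_CORE` entry is our reading of the traditional architecture described earlier, not a tuple given in the analysis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PgppKnowledge:
    human_identity_sensitive: bool     # True = ▲H, False = △H
    network_identity_sensitive: bool   # True = ▲N, False = △N
    data_sensitive: bool               # True = ●,  False = ⊙

    def can_attribute_activity(self) -> bool:
        # Assumed rule: sensitive data plus at least one sensitive identity.
        has_identity = (
            self.human_identity_sensitive or self.network_identity_sensitive
        )
        return has_identity and self.data_sensitive


# Tuples copied from the PGPP analysis above.
PGPP = {
    "User": PgppKnowledge(True, True, True),
    "PGPP-GW": PgppKnowledge(True, False, False),
    "NGC": PgppKnowledge(False, False, True),
}

# Our reading of the traditional design: the core sees identity and activity.
TRADITIONAL_CORE = PgppKnowledge(True, True, True)

# Every entity other than the user that could tie activity to a person.
exposed = [
    name
    for name, knowledge in PGPP.items()
    if name != "User" and knowledge.can_attribute_activity()
]
```

With the PGPP tuples, `exposed` comes out empty, whereas `TRADITIONAL_CORE.can_attribute_activity()` is true.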

Oblivious DNS

At INVISV, we have personal experience in applying the Decoupling Principle to enhance privacy in large systems that underpin much of modern connectivity. It is well known that DNS leaks information that an Internet user may want to keep private, such as the websites they are visiting, user identifiers, MAC addresses, and their IP address. This naturally leads to the user’s recursive DNS resolver holding an incredible amount of private information.

To address this design flaw, we designed Oblivious DNS (ODNS), techniques from which were later adopted by Oblivious DNS over HTTPS (ODoH). (We use this approach in Relay.) In Oblivious DNS protocols, the client obfuscates its queries before issuing them to the normal recursive resolver, which is often run by their ISP. The obfuscated queries then reach an oblivious resolver, a server that has been configured to be the authoritative server for the obfuscated queries, and holds the encryption keys needed to decrypt the original query. This server then acts as a recursive resolver for the plaintext query.

Oblivious DNS Analysis:

- Client: (▲,●)

- Resolver: (▲,⊙)

- Oblivious Resolver: (△,●)

- Origin: (△,●)

In Oblivious DNS, the recursive resolver knows the user’s sensitive identity (IP address) but not their sensitive data (queries), while the ODNS resolver knows the sensitive data (queries) but, thanks to the layer of recursion, not the sensitive identity (IP address). ODNS thus decouples sensitive user information across multiple parties: user privacy is maintained so long as the recursive resolver and the ODNS resolver do not collude.
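The flow can be illustrated with a toy example. This is emphatically not the ODNS or ODoH wire format: the zone `.odns.example`, the shared `key`, and the hash-based XOR cipher are all stand-ins we chose so the sketch runs with the standard library only, and the cipher is not secure. The point is only to show what each resolver observes.

```python
import base64
import hashlib

ODNS_SUFFIX = ".odns.example"  # hypothetical zone served by the oblivious resolver


def _keystream(key: bytes, length: int) -> bytes:
    """Toy keystream from SHA-256 in counter mode (illustration only, insecure)."""
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + counter.to_bytes(4, "big")).digest()
        counter += 1
    return out[:length]


def _xor(data: bytes, key: bytes) -> bytes:
    return bytes(a ^ b for a, b in zip(data, _keystream(key, len(data))))


def obfuscate(qname: str, key: bytes) -> str:
    """Client side: hide the real name inside a label under the ODNS zone."""
    label = base64.b32encode(_xor(qname.encode(), key)).decode()
    return label.rstrip("=").lower() + ODNS_SUFFIX


def deobfuscate(wire_name: str, key: bytes) -> str:
    """Oblivious resolver side: recover the original query name."""
    label = wire_name[: -len(ODNS_SUFFIX)].upper()
    label += "=" * (-len(label) % 8)  # restore the stripped base32 padding
    return _xor(base64.b32decode(label), key).decode()


def resolve_flow(client_ip: str, qname: str, key: bytes):
    """Return what the recursive and oblivious resolvers each observe."""
    wire_name = obfuscate(qname, key)
    recursive_view = {"source": client_ip, "qname": wire_name}
    oblivious_view = {
        "source": "recursive-resolver",
        "qname": deobfuscate(wire_name, key),
    }
    return recursive_view, oblivious_view


recursive_view, oblivious_view = resolve_flow(
    "203.0.113.7", "www.example.com", b"demo-key"
)
```

Here the recursive resolver's view contains the client address and an opaque name, while the oblivious resolver's view contains the real name but only the recursive resolver as the source.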

Other Recent Examples: Relay

Other systems use the Decoupling Principle to protect user privacy. INVISV Relay and Apple iCloud Private Relay, for example, decouple user identity (IP address) from web traffic by relaying user traffic through network infrastructure partners (Fastly, etc.) before connecting to the ultimate destinations on the Internet. iCloud Private Relay, for instance, uses two proxies. The ingress proxy is run by Apple, which can see user IP addresses but not user traffic. The egress proxy, run by infrastructure partners, has visibility into user traffic but does not see the user IP address.

Relay Analysis:

- User: (▲,●)

- Relay 1: (▲,⊙)

- Relay 2: (△,●)

- Origin: (△,●)

In Relay, the user’s identity (their true network-layer identifier) is known to Relay 1, but their request and the subsequent reply (the data) are not, as they are hidden in an encrypted stream. Relay 2 has no knowledge of the user’s identity, but may learn limited information about the user’s request (such as the FQDN of the origin server). Finally, the Origin learns only of the user’s request.

System Examples That Don’t Follow the Decoupling Principle

VPNs

There are many examples of systems that aim to protect privacy but create new vulnerabilities and new points of surveillance. Such systems often fail to decouple sensitive information and thus offer privacy only under the assumption of trust in some network entity. Classic examples include centralized VPN and security processing services.

Traditional VPN Analysis:

- Client: (▲,●)

- VPN Server: (▲,●)

- Origin: (△,●)

By funneling all traffic through a single third party, such systems create a single point of observation. This design requires trusting the VPN server to voluntarily refrain from violating privacy, rather than removing the need for such trust architecturally.
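One way to compare the two designs is to ask how many entities, other than the user, would have to pool their knowledge before sensitive identity and sensitive data end up in the same hands. The sketch below is our own illustration: the function `min_collusion` and the set-based encoding are assumptions, while the entries copy the sensitive items from the VPN and Relay analyses above.

```python
from itertools import combinations

SENSITIVE_ID, SENSITIVE_DATA = "▲", "●"

# Sensitive items held by each non-user entity, taken from the analyses above.
VPN = {"VPN Server": {"▲", "●"}, "Origin": {"●"}}
RELAY = {"Relay 1": {"▲"}, "Relay 2": {"●"}, "Origin": {"●"}}


def min_collusion(system: dict) -> "int | None":
    """Smallest number of entities whose pooled knowledge couples ▲ with ●."""
    names = list(system)
    for size in range(1, len(names) + 1):
        for group in combinations(names, size):
            pooled = set().union(*(system[name] for name in group))
            if {SENSITIVE_ID, SENSITIVE_DATA} <= pooled:
                return size
    return None  # no combination of entities can link identity to data


vpn_threshold = min_collusion(VPN)
relay_threshold = min_collusion(RELAY)
```

Under this encoding, the VPN threshold is 1 (the server alone suffices), while the Relay threshold is 2, which is the architectural difference the analysis describes.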

Moving Forward

INVISV is focused on making privacy a default expectation for Internet services. We continue to apply the Decoupling Principle to systems that we all rely on.

An extended edition of our analysis of The Decoupling Principle will be published next month.